Compiler Engineer

Expertise: Machine Learning, XLA, CPU and GPU programming.

Objective:

Build and train a basic character-level Recurrent Neural Network (RNN) from scratch to classify words, showing how preprocessing for NLP modeling works at a low level. The character-level RNN reads a word as a series of characters, outputting a prediction and a “hidden state” at each step and feeding its previous hidden state into the next step. The final prediction is taken as the output, i.e. which class the word belongs to.

Specifically, it trains on a few thousand surnames from 18 languages of origin and predicts which language a name is from based on its spelling.
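A minimal sketch of the idea described above, not the project's actual code: each character is one-hot encoded, and the network consumes one character at a time while carrying a hidden state forward. The alphabet, the hidden size of 128, and the names `word_to_tensor`, `CharRNN` and `classify` are assumptions made for illustration; only the 18 output classes come from the description.

```python
import string

import torch
from torch import nn

# Assumed alphabet: ASCII letters plus a few punctuation marks.
ALL_LETTERS = string.ascii_letters + " .,;'"
N_LETTERS = len(ALL_LETTERS)


def word_to_tensor(word: str) -> torch.Tensor:
    """One-hot encode a word as a (len(word), 1, N_LETTERS) tensor.

    Raises ValueError for characters outside ALL_LETTERS.
    """
    tensor = torch.zeros(len(word), 1, N_LETTERS)
    for i, ch in enumerate(word):
        tensor[i, 0, ALL_LETTERS.index(ch)] = 1.0
    return tensor


class CharRNN(nn.Module):
    """Hypothetical single-layer RNN cell built from two linear layers."""

    def __init__(self, input_size: int, hidden_size: int, output_size: int):
        super().__init__()
        self.hidden_size = hidden_size
        self.i2h = nn.Linear(input_size + hidden_size, hidden_size)
        self.h2o = nn.Linear(hidden_size, output_size)

    def forward(self, letter: torch.Tensor, hidden: torch.Tensor):
        # Combine the current character with the previous hidden state.
        combined = torch.cat((letter, hidden), dim=1)
        hidden = torch.tanh(self.i2h(combined))
        output = self.h2o(hidden)  # one prediction (logits) per step
        return output, hidden

    def init_hidden(self) -> torch.Tensor:
        return torch.zeros(1, self.hidden_size)


def classify(model: CharRNN, word: str) -> torch.Tensor:
    """Feed the word character by character; keep only the final prediction."""
    hidden = model.init_hidden()
    for letter in word_to_tensor(word):
        output, hidden = model(letter, hidden)
    return output


rnn = CharRNN(N_LETTERS, hidden_size=128, output_size=18)
logits = classify(rnn, "Albert")  # untrained, so the scores are arbitrary
```

The largest value in `logits` would be read as the predicted language once the model has been trained.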

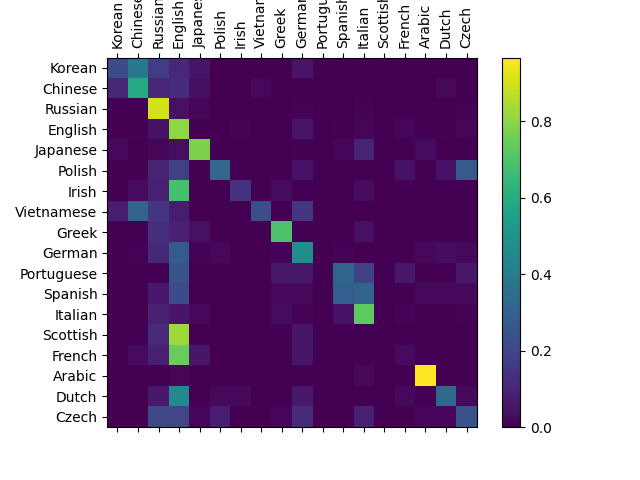

A confusion matrix is used to see how well the network performs on different categories, indicating for every actual language (rows) which language the network guesses (columns).
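As a rough illustration of how such a matrix can be built, the sketch below counts (actual, predicted) pairs and normalizes each row so that it sums to 1. The function name `confusion_matrix` and the three-class toy labels are made up for the example; the real project has 18 categories.

```python
def confusion_matrix(actual, predicted, n_classes):
    """Rows are actual classes, columns are predicted classes.

    Each row is normalized to sum to 1 (rows with no samples stay at 0).
    """
    counts = [[0] * n_classes for _ in range(n_classes)]
    for a, p in zip(actual, predicted):
        counts[a][p] += 1

    normalized = []
    for row in counts:
        total = sum(row)
        normalized.append([c / total if total else 0.0 for c in row])
    return normalized


# Toy labels for three hypothetical classes.
actual = [0, 0, 1, 1, 2, 2]
predicted = [0, 1, 1, 1, 2, 0]
matrix = confusion_matrix(actual, predicted, n_classes=3)
```

A strong diagonal means the network mostly guesses the correct class; off-diagonal mass shows which classes get confused with each other.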

Objective:

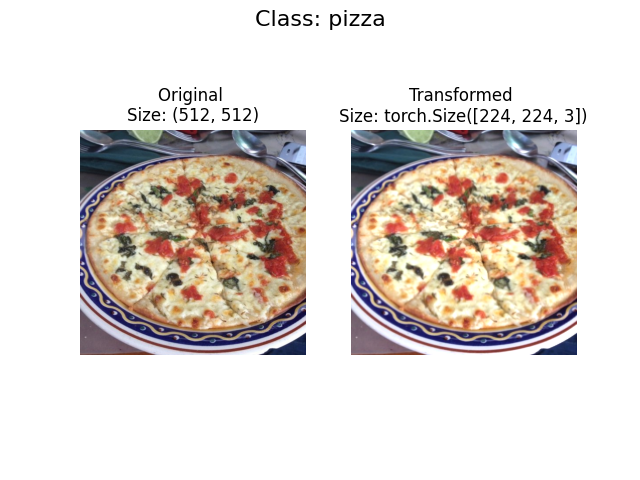

Use torchvision.datasets as well as a custom Dataset class to load images of food, then build a PyTorch computer vision model to classify them.
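A custom Dataset of this kind could look roughly like the sketch below. It assumes a hypothetical folder layout of `root/<class_name>/<image>.jpg` and the class name `ImageFolderDataset`; neither is taken from the project. torchvision's built-in `datasets.ImageFolder` covers the same layout, so a hand-written class is mainly useful for learning or for layouts the built-in loader does not handle.

```python
from pathlib import Path

import numpy as np
import torch
from PIL import Image
from torch.utils.data import Dataset


class ImageFolderDataset(Dataset):
    """Hypothetical dataset for images stored as root/<class_name>/<image>.jpg."""

    def __init__(self, root, transform=None):
        self.paths = sorted(Path(root).glob("*/*.jpg"))
        # Class names are inferred from the parent folder of each image.
        self.classes = sorted({p.parent.name for p in self.paths})
        self.class_to_idx = {name: i for i, name in enumerate(self.classes)}
        self.transform = transform

    def __len__(self):
        return len(self.paths)

    def __getitem__(self, index):
        path = self.paths[index]
        image = Image.open(path).convert("RGB")
        if self.transform is not None:
            image = self.transform(image)
        else:
            # Default: HWC uint8 array -> CHW float tensor in [0, 1].
            array = np.array(image)
            image = torch.from_numpy(array).permute(2, 0, 1).float() / 255
        return image, self.class_to_idx[path.parent.name]
```

An instance can be passed to `torch.utils.data.DataLoader` in the usual way to obtain batches for training.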

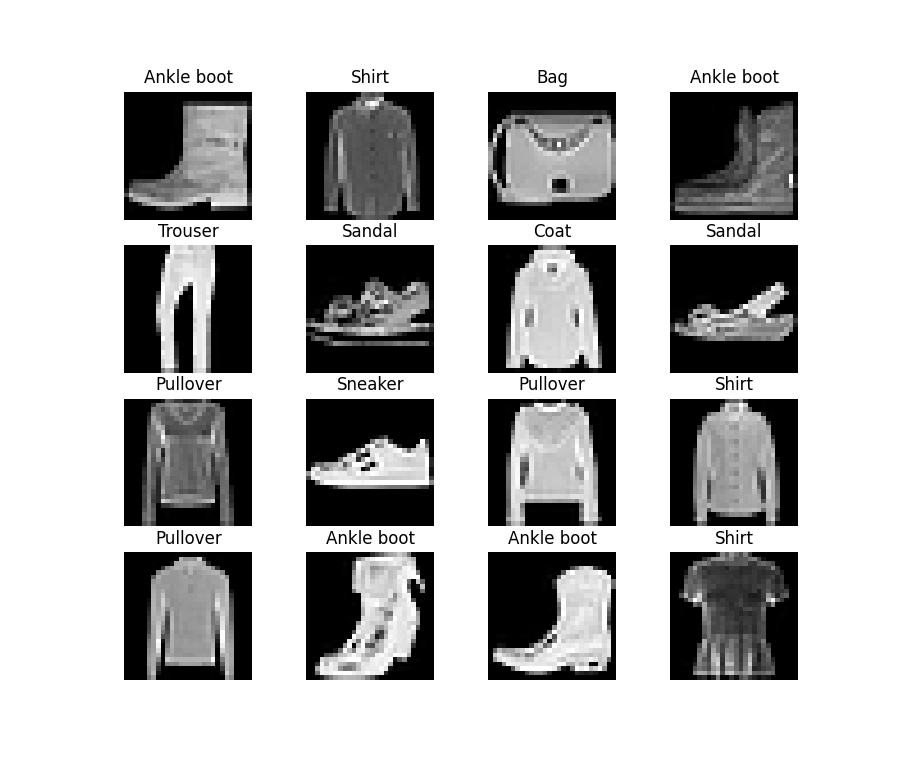

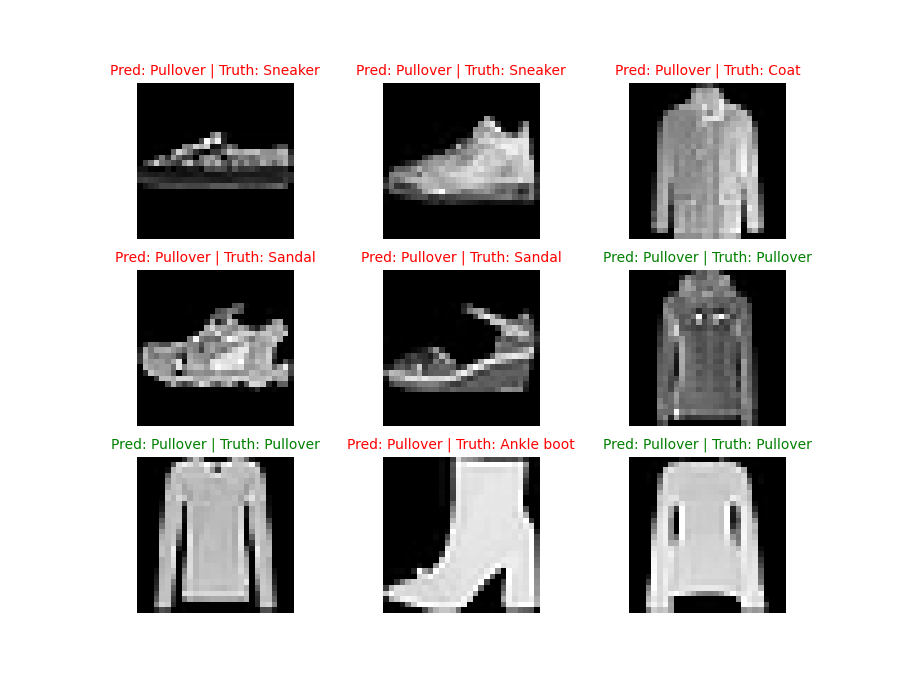

Objective:

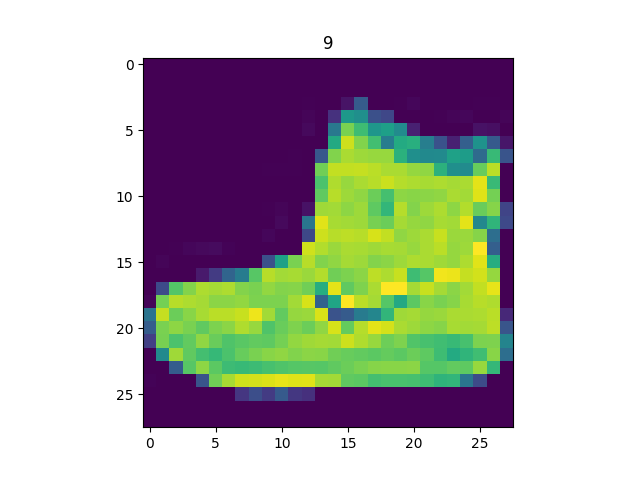

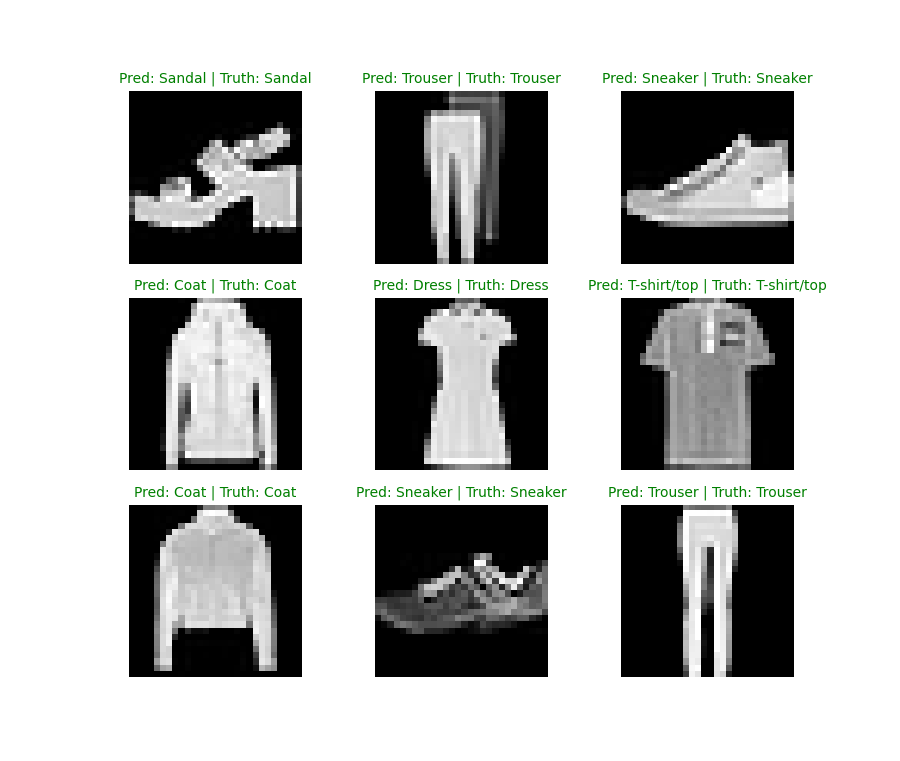

A computer vision neural network that identifies different styles of clothing in images. In essence, it takes pixel values and builds a model that finds patterns in them, which can then be applied to future pixel values.

Key Features:

This project uses images of different pieces of clothing from the FashionMNIST dataset. The images are loaded with a PyTorch DataLoader for use in a training loop. A multi-class classification model learns patterns in the data, with nn.CrossEntropyLoss() as the loss function and an SGD optimizer in the training loop. Mini-batches of 32 are used so that gradient descent is performed once per mini-batch rather than once per epoch, i.e. more often per epoch. A baseline of two nn.Linear() layers with the nn.ReLU() non-linear function was used as a starting point and improved upon with subsequent, more complicated models. nn.Flatten() was used to compress the dimensions of a tensor into a single vector.
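The baseline and training loop described above might be sketched as follows. To stay self-contained, the example uses random tensors with FashionMNIST's shape (1x28x28 images, 10 classes) instead of downloading the dataset; the hidden size of 10, the learning rate of 0.1 and the two epochs are assumptions, not values from the project.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(42)

# Stand-in data shaped like FashionMNIST (random, for illustration only).
images = torch.rand(256, 1, 28, 28)
labels = torch.randint(0, 10, (256,))
loader = DataLoader(TensorDataset(images, labels), batch_size=32, shuffle=True)

baseline = nn.Sequential(
    nn.Flatten(),            # (N, 1, 28, 28) -> (N, 784)
    nn.Linear(28 * 28, 10),  # assumed hidden size of 10
    nn.ReLU(),
    nn.Linear(10, 10),       # one output logit per class
)
loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(baseline.parameters(), lr=0.1)  # assumed lr

updates = 0
for epoch in range(2):
    for X, y in loader:  # one gradient step per mini-batch of 32
        logits = baseline(X)
        loss = loss_fn(logits, y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        updates += 1
```

With 256 samples and a batch size of 32, the optimizer steps 8 times per epoch instead of once, which is the point of mini-batching.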

A convolutional neural network based on the TinyVGG architecture was used to improve upon the baseline, with nn.Conv2d() as the convolutional layer and nn.MaxPool2d() as the max pooling layer.
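A TinyVGG-style network for 28x28 grayscale images could be laid out roughly as below. This is a sketch under assumptions: the class name `TinyVGGSketch`, the 10 hidden channels, the 3x3 kernels with padding 1 and the two-block depth are illustrative choices, not details confirmed by the project.

```python
import torch
from torch import nn


class TinyVGGSketch(nn.Module):
    """Two conv blocks followed by a linear classifier (assumed layout)."""

    def __init__(self, in_channels=1, hidden_units=10, n_classes=10):
        super().__init__()
        self.block_1 = nn.Sequential(
            nn.Conv2d(in_channels, hidden_units, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(hidden_units, hidden_units, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2),  # 28x28 -> 14x14
        )
        self.block_2 = nn.Sequential(
            nn.Conv2d(hidden_units, hidden_units, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(hidden_units, hidden_units, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2),  # 14x14 -> 7x7
        )
        self.classifier = nn.Sequential(
            nn.Flatten(),
            nn.Linear(hidden_units * 7 * 7, n_classes),
        )

    def forward(self, x):
        return self.classifier(self.block_2(self.block_1(x)))


model = TinyVGGSketch()
out = model(torch.rand(4, 1, 28, 28))  # a dummy batch of four images
```

The `7 * 7` in the classifier follows from the two pooling layers each halving the 28x28 input; a different input size would need a different value.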

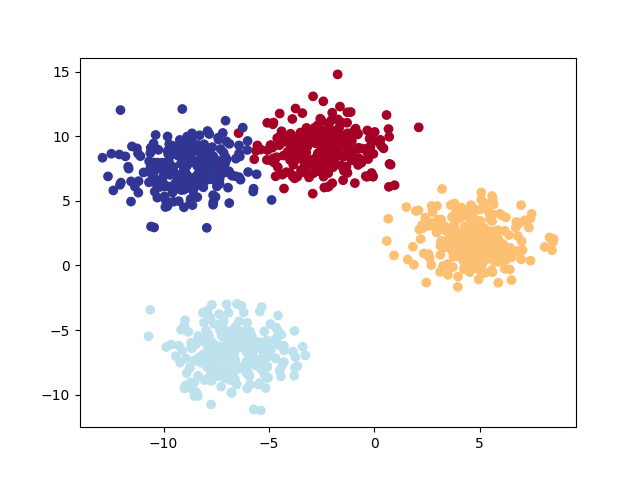

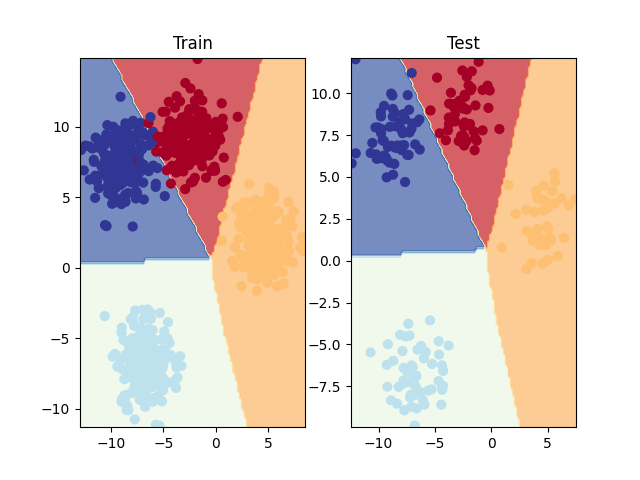

Objective:

Classify something from a list of more than two options (e.g. classifying a photo as a cat, a dog or a chicken).

Key Features:

Classification data: Leverage Scikit-Learn's make_blobs() function, which creates as many classes as requested (using the centers parameter).

Turn data into tensors: Turn the data into tensors (the default of make_blobs() is to use NumPy arrays).

Split data into training and test sets: Split the data into training and test sets using train_test_split().

Build a multi-class classification model: Subclass nn.Module with a model that takes in three hyperparameters: input_features, the number of X features coming into the model; output_features, the desired number of output features; and hidden_units, the number of hidden neurons in each hidden layer.

Set up device agnostic code for the model to run on CPU or GPU if available.

Loss and activation function with optimizer: Use CrossEntropyLoss() as the loss function, Stochastic Gradient Descent with a learning rate of 0.001 as the optimizer, and softmax as the activation that turns the model's raw outputs into class probabilities.
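The steps above can be sketched end to end as follows. The number of classes (4), features (2), samples (1000), `cluster_std`, the hidden size of 8, the 100 epochs and the class name `BlobModel` are all assumptions for illustration; only the learning rate of 0.001 comes from the description. For simplicity the sketch splits the NumPy arrays first and converts them to tensors afterwards.

```python
import torch
from torch import nn
from sklearn.datasets import make_blobs
from sklearn.model_selection import train_test_split

NUM_CLASSES, NUM_FEATURES = 4, 2  # assumed values

# 1. Create the data (NumPy arrays by default).
X, y = make_blobs(n_samples=1000, n_features=NUM_FEATURES,
                  centers=NUM_CLASSES, cluster_std=1.5, random_state=42)

# 2. Split (80/20), then turn everything into tensors.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42)
X_train = torch.from_numpy(X_train).type(torch.float)
X_test = torch.from_numpy(X_test).type(torch.float)
y_train = torch.from_numpy(y_train).type(torch.long)
y_test = torch.from_numpy(y_test).type(torch.long)


class BlobModel(nn.Module):
    """Hypothetical multi-class model with two hidden layers."""

    def __init__(self, input_features, output_features, hidden_units=8):
        super().__init__()
        self.layers = nn.Sequential(
            nn.Linear(input_features, hidden_units),
            nn.ReLU(),
            nn.Linear(hidden_units, hidden_units),
            nn.ReLU(),
            nn.Linear(hidden_units, output_features),
        )

    def forward(self, x):
        return self.layers(x)  # raw logits


# 3. Device-agnostic setup.
device = "cuda" if torch.cuda.is_available() else "cpu"
model = BlobModel(NUM_FEATURES, NUM_CLASSES).to(device)
X_train, y_train = X_train.to(device), y_train.to(device)

# 4. Loss and optimizer; CrossEntropyLoss expects logits, not probabilities.
loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.001)

for epoch in range(100):
    loss = loss_fn(model(X_train), y_train)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

# 5. Softmax turns logits into probabilities; argmax picks the class.
model.eval()
with torch.inference_mode():
    probs = torch.softmax(model(X_test.to(device)), dim=1)
    preds = probs.argmax(dim=1)
```

Softmax is applied only when reading predictions, because `nn.CrossEntropyLoss` already works on raw logits during training.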

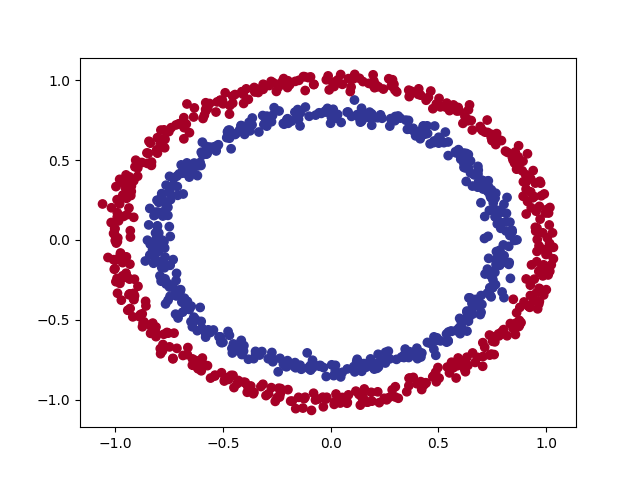

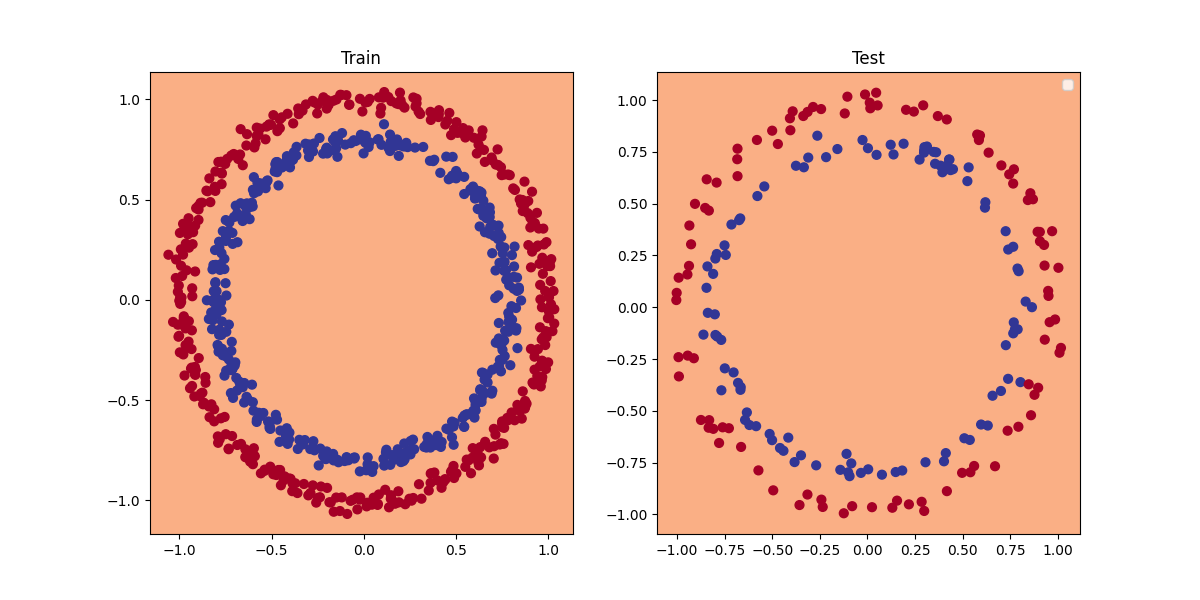

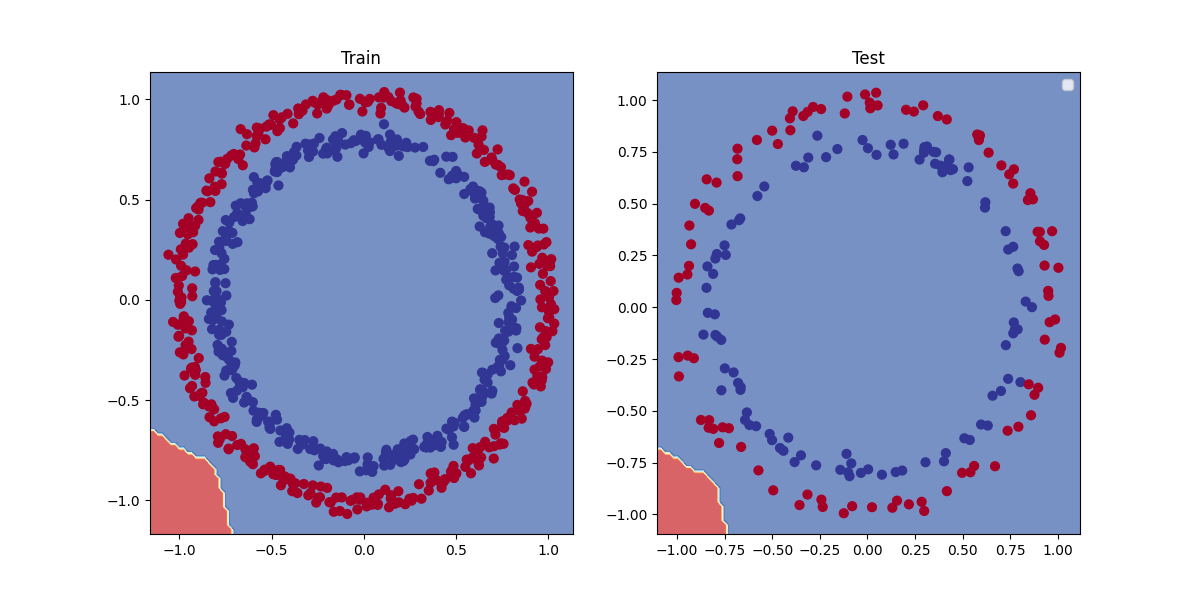

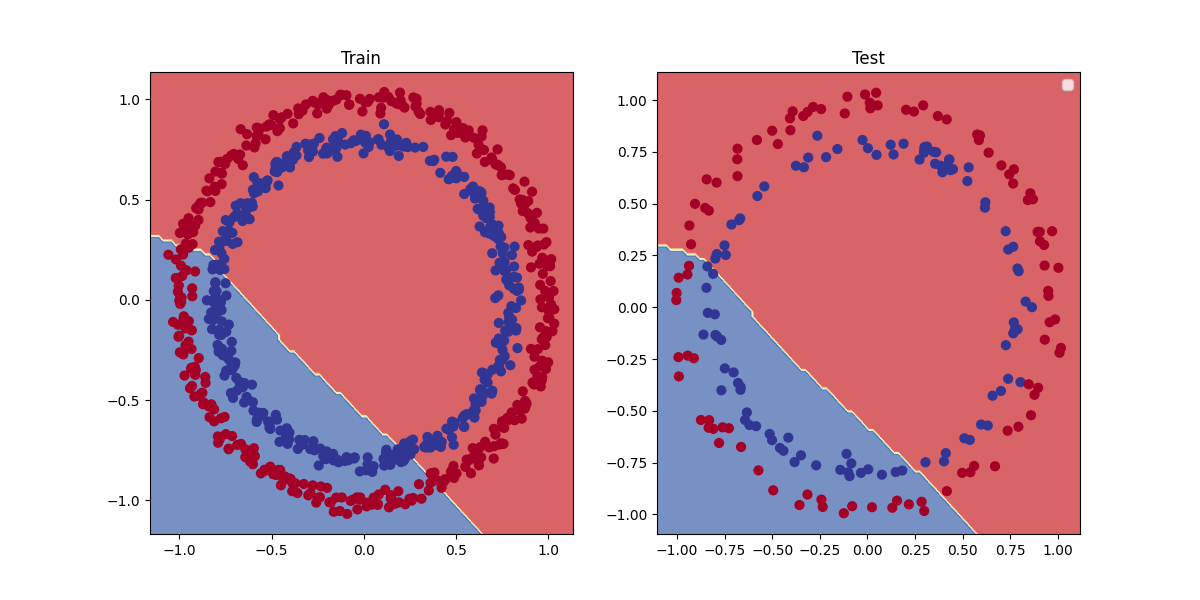

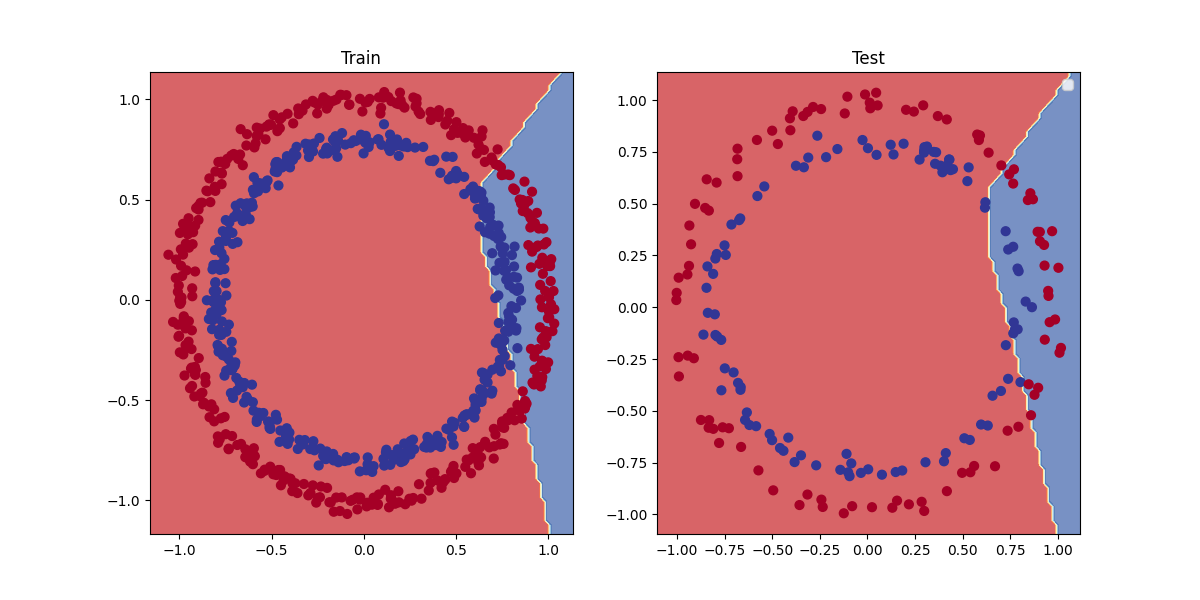

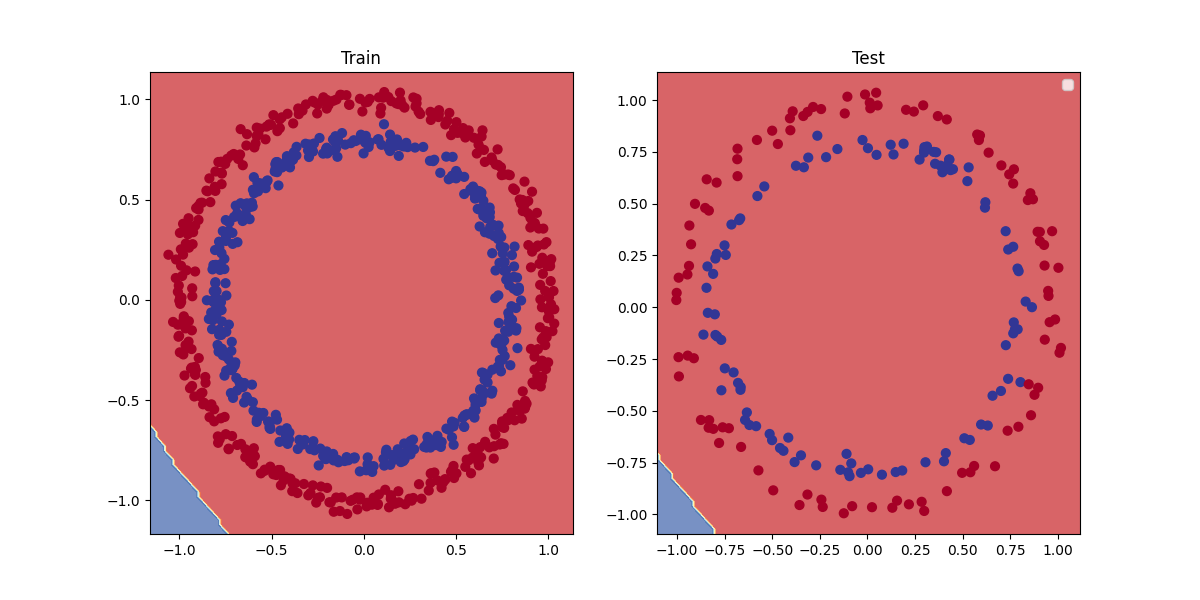

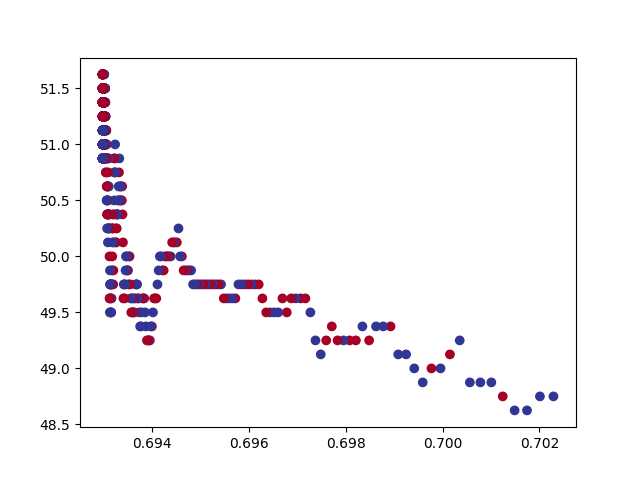

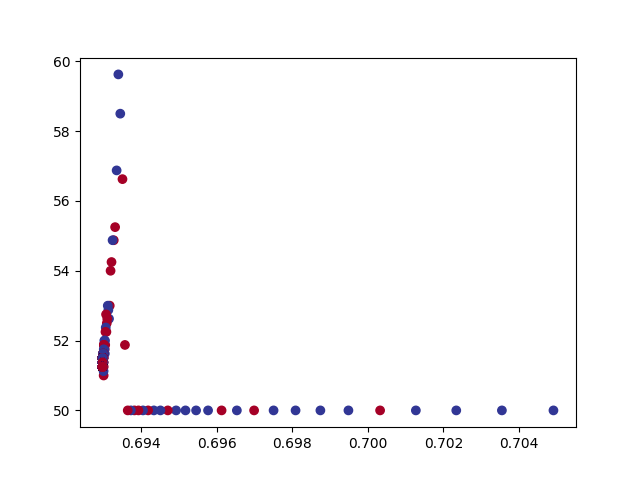

Objective:

A PyTorch neural network to classify dots into red (0) or blue (1).

This dataset is a toy problem used to try and test things out in machine learning. But it represents the core idea of classification: data is represented as numerical values, which are used to build a model that is able to classify it, here separating it into red or blue dots.

Key Features:

Turn data into tensors: Turn NumPy arrays into PyTorch tensors.

Split data into training and test sets: Train a model on the training set to learn the patterns

between X and Y and then evaluate those learned patterns on the test dataset. (80% training, 20% testing)

Build a model: Set up device agnostic code for the model to run on CPU or GPU if available.

Constructed a model by subclassing nn.Module, defined a loss function and optimizer, and created a training loop, adjusting them so they work with the classification dataset. Here I used binary cross entropy loss with logits (nn.BCEWithLogitsLoss) because it has a sigmoid layer built in, and Stochastic Gradient Descent as the optimizer.
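A minimal sketch of this setup, assuming `nn.BCEWithLogitsLoss` is the loss meant above. The data here is a stand-in (random 2D points labeled by a straight line), not the project's dots dataset, and the layer sizes, learning rate and epoch count are illustrative assumptions. The last lines check that the built-in sigmoid gives the same result as applying sigmoid by hand before `nn.BCELoss`.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Stand-in toy data: label 1 ("blue") if x0 + x1 > 0, else 0 ("red").
X = torch.randn(200, 2)
y = (X.sum(dim=1) > 0).float()

# 80% training, 20% testing.
split = int(0.8 * len(X))
X_train, y_train = X[:split], y[:split]
X_test, y_test = X[split:], y[split:]

model = nn.Sequential(nn.Linear(2, 8), nn.ReLU(), nn.Linear(8, 1))
loss_fn = nn.BCEWithLogitsLoss()  # sigmoid is applied inside the loss
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

for epoch in range(200):
    logits = model(X_train).squeeze(dim=1)  # raw outputs, no sigmoid here
    loss = loss_fn(logits, y_train)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

# At prediction time: logits -> probabilities -> labels (0 or 1).
with torch.inference_mode():
    test_logits = model(X_test).squeeze(dim=1)
    test_preds = torch.round(torch.sigmoid(test_logits))

# BCEWithLogitsLoss(z, t) matches BCELoss(sigmoid(z), t) on the same inputs.
z = torch.tensor([-2.0, 0.0, 3.0])
t = torch.tensor([0.0, 1.0, 1.0])
with_logits = nn.BCEWithLogitsLoss()(z, t)
manual = nn.BCELoss()(torch.sigmoid(z), t)
```

Keeping the sigmoid inside the loss is generally the more numerically stable option, which is a common reason to prefer it over a separate sigmoid layer followed by `nn.BCELoss`.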